InstaCart has recently open sourced anonymized data on customer orders for its Kaggle competition. You can find out more info from the link below.

https://www.kaggle.com/c/instacart-market-basket-analysis

Previously, I did some data exploration to discover some interesting insights. See my previous blog post.

Next I would like to use their anonymized data to build a product recommender system. There are many approaches/strategies in product recommendations, eg. Most popular items, Also bought/viewed, Featured items, and etc. I am going to explore the most popular items approach and collaborative filtering (also bought) approach.

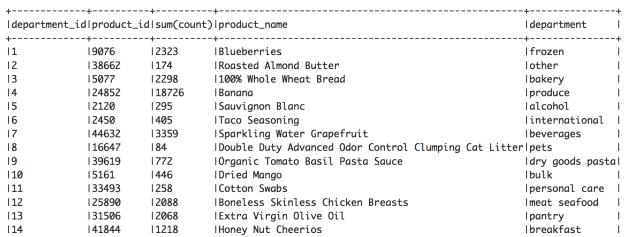

We can identify the top 5 popular items for each department and utilize them for most popular items recommendation. For example, the top 5 items for frozen department are Blueberries, Organic Broccoli Florets, Organic Whole Strawberries, Pipeapple Chunks, Frozen Organic Wild Blueberries.

Next, I utilized Spark ALS algorithm to build a collaborative filtering based recommender system.

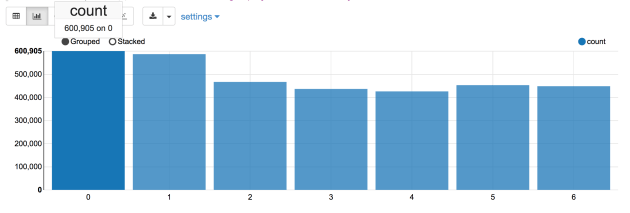

Some stats about the data

Total user-product pair (rating): 1,384,617

Total users: 131,209

Total products: 39,123

Given the data, I splitted the data into train set (0.8) vs test set (0.2) randomly. This resulted in

Number of train elements: 1,107,353

Number of test elements: 277,264

Here are the parameters I used in ALS, rank = 10, lambda = 1, number of iterations = 10, 50, 60, 80

- Number of iterations = 10, RMSE = 0.9999895

- Number of iterations = 50, RMSE = 0.9999828

- Number of iterations = 60, RMSE = 0.9999875

- Number of iterations = 80, RMSE = 0.9999933

We can also try out different ranks in grid search.

Here are some recommendations suggested by ALS collaborative filtering algorithm (number of iterations =80, rank=10)

Given user 124383 Transaction History

+——-+———-+—–+———-+—————————+——–+————-+

|user_id|product_id|count|product_id|product_name |aisle_id|department_id|

+——-+———-+—–+———-+—————————+——–+————-+

|124383 |49478 |1 |49478 |Frozen Organic Strawberries|24 |4 |

|124383 |21903 |1 |21903 |Organic Baby Spinach |123 |4 |

|124383 |19508 |1 |19508 |Corn Tortillas |128 |3 |

|124383 |20114 |1 |20114 |Jalapeno Peppers |83 |4 |

|124383 |44142 |1 |44142 |Red Onion |83 |4 |

|124383 |20345 |1 |20345 |Thin Crust Pepperoni Pizza |79 |1 |

|124383 |27966 |1 |27966 |Organic Raspberries |123 |4 |

+——-+———-+—–+———-+—————————+——–+——————+

Here are the recommendations

+———-+————————————————————-+——–+————-+

|product_id|product_name |aisle_id|department_id|

+———-+————————————————————-+——–+——————+

|28717 |Sport Deluxe Adjustable Black Ankle Stabilizer |133 |11 |

|15372 |Meditating Cedarwood Mineral Bath |25 |11 |

|18962 |Arroz Calasparra Paella Rice |63 |9 |

|2528 |Cluckin’ Good Stew |40 |8 |

|21156 |Dreamy Cold Brew Concentrate |90 |7 |

|12841 |King Crab Legs |39 |12 |

|24862 |Old Indian Wild Cherry Bark Syrup |47 |11 |

|37535 |Voluminous Extra-Volume Collagen Mascara – Blackest Black 680|132 |11 |

|30847 |Wild Oregano Oil |47 |11 |

+———-+————————————————————-+——–+————-+